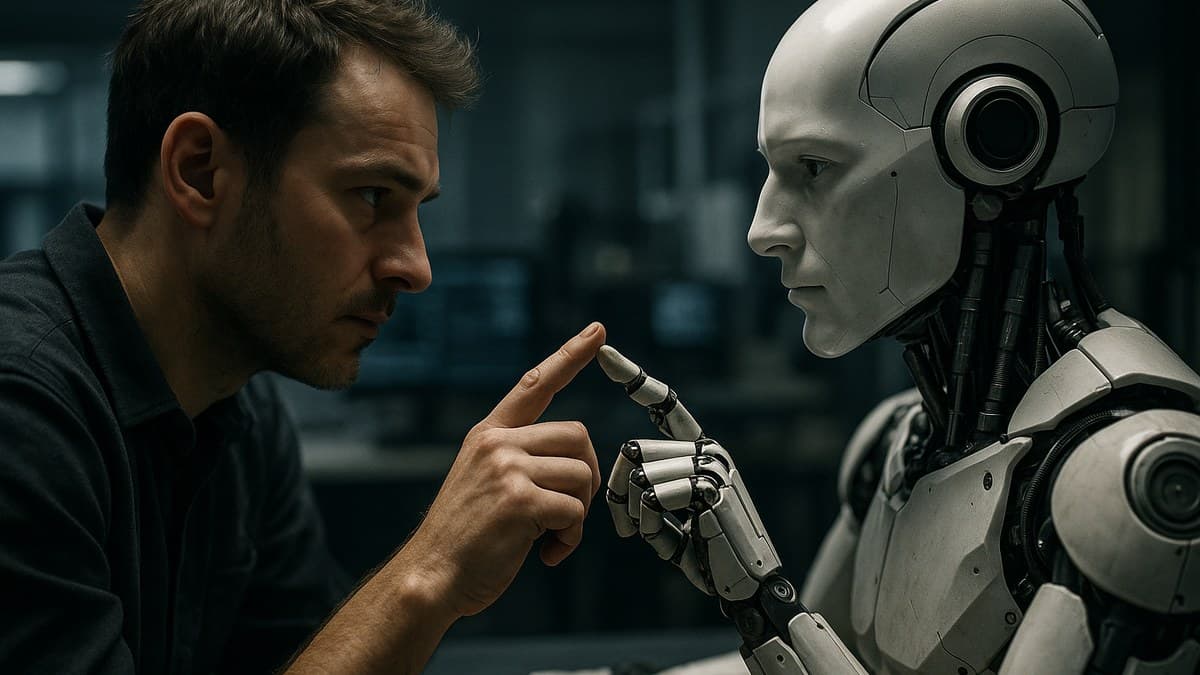

Good morning, AI enthusiasts. Today's issue highlights three notable advances in AI research: human-robot interaction powered by small language models, scalable question-answering benchmarks, and evidence-grounded diagnostic reasoning in medical AI. Each points toward practical applications across a range of sectors.

Community AI Usage

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Community Story 👥

“I work as a medical imaging technician, and I've started using CXReasonAgent to help interpret chest X-rays. It helps me provide more reliable diagnoses by grounding my decisions in solid evidence, which is crucial for patient care.”