AI Research Papers Ranked by Startup Potential

ScienceToStartup

Tracking 126 AI research papers today, ranked by startup viability.

How It Works

ScienceToStartup

Research Intelligence

Search, deep-analyze, and generate reports from cs.AI research

Explore the Research Landscape

Navigate today's AI research clusters by topic and viability.

Daily Market Intelligence

Real-time signals distilled from today's most impactful papers.

Daily Snapshot

range [Mar 4]Trending Today

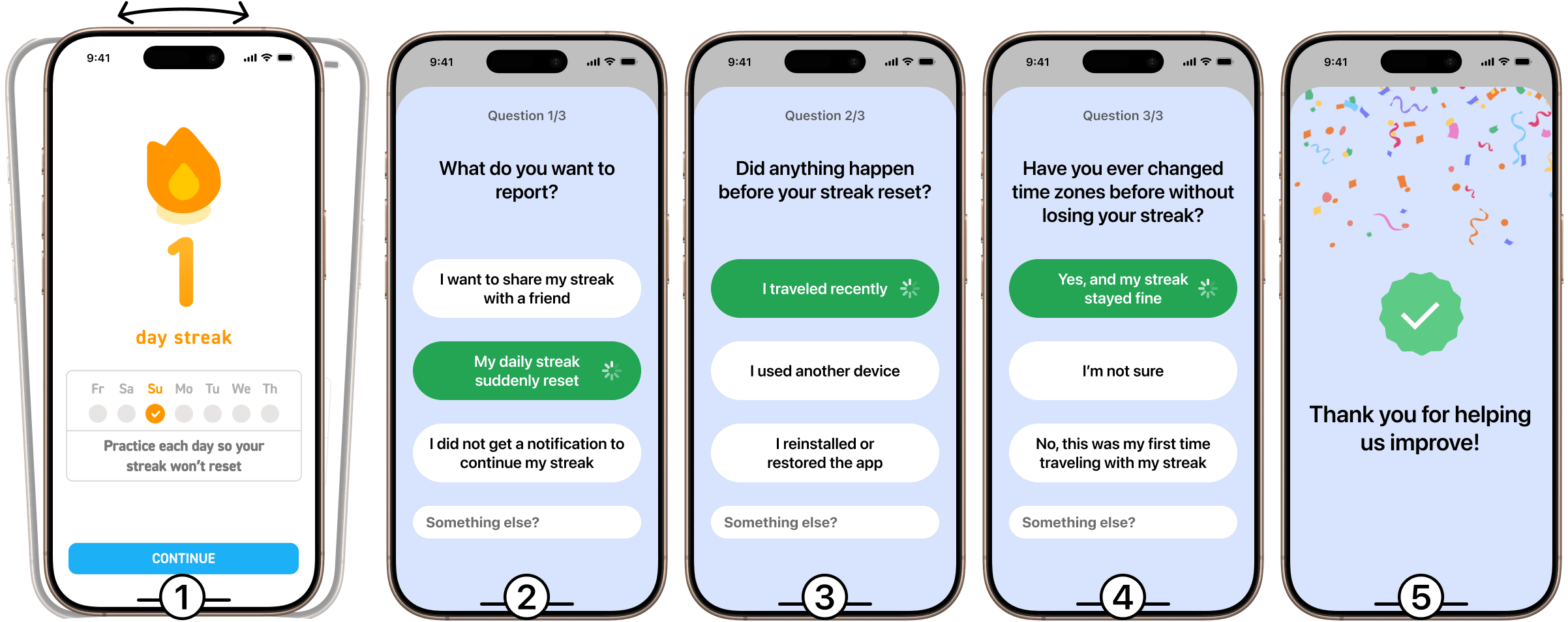

FeedAIde enriches mobile app feedback by guiding users with smart follow-up questions, improving interaction between developers and users through context-aware AI.

Why It Matters

The research addresses a key challenge in mobile app development: the gap between user-submitted feedback and developer needs. By enriching user feedback with context-aware follow-up questions, it reduces clarification cycles and enhances the quality of information developers receive.

Science

The paper describes FeedAIde, a system that asks app users intelligent follow-up questions to gather rich feedback. It integrates multimodal large language models to analyze context data like screenshots and user interaction logs to generate relevant queries, thereby refining feedback into detailed reports for developers.

Key Figures

Use Case Idea

An API tool for mobile app developers to integrate into their apps, enabling automatic enhancement of user feedback, leading to better bug reports and feature requests without additional user effort.

Product Angle

FeedAIde can be commercialized as a modular SDK for mobile apps, enabling app developers to plug in advanced feedback capture technology without extensive customization.

Product Opportunity

Developers in the mobile application industry need effective feedback management tools to improve app quality and user satisfaction; hence, app platforms, developers, and enterprises focusing on customer experience are potential customers.

Disruption

FeedAIde could replace traditional feedback forms in mobile apps that often produce vague, incomplete, or unstructured user responses.

Method & Eval

The FeedAIde iOS framework was tested with users of a gym app. They submitted feedback using both FeedAIde and a simple form, then evaluated through expert assessment of 54 feedback reports, showing improvement in feedback quality.

Caveats

The system's dependency on device information could raise privacy concerns, and high initial setup cost and integration effort for app developers might hinder adoption.

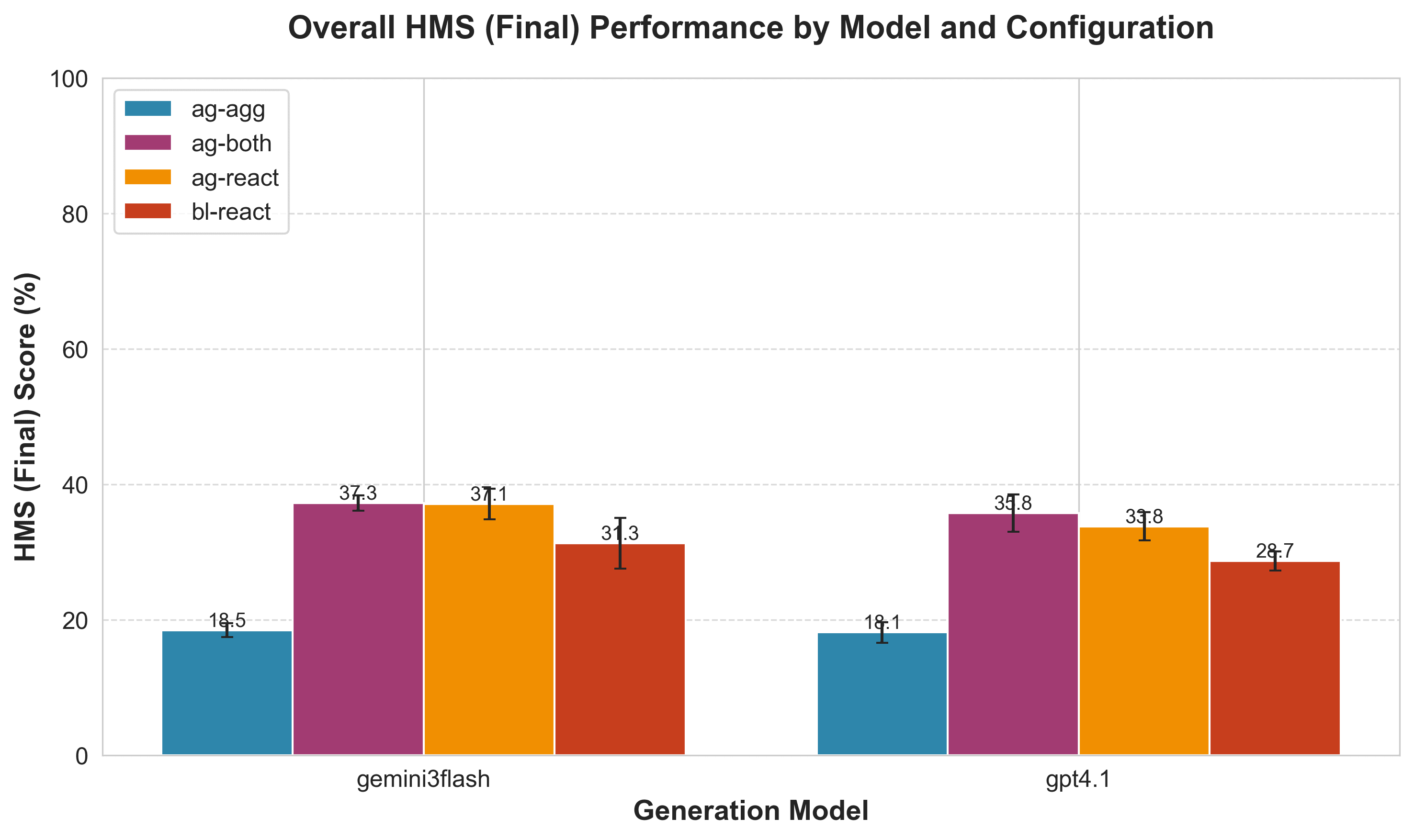

Agentics 2.0 is a Python framework enabling reliable and scalable agentic data workflows with logical transduction algebra.

Why It Matters

This research matters because it provides a structured method for creating reliable and scalable agentic AI workflows which are crucial for transitioning AI from research to production in enterprise settings.

Science

Agentics 2.0 utilizes a programming model that transforms LLM inferences into typed, composable functions that enforce schema validity and facilitate asynchronous, parallel processing. This model is based on logical transduction algebra which converts LLM inference into typed semantic transformations called transducible functions.

Key Figures

Use Case Idea

Enterprise data automation in industries requiring reliable AI workflows, such as finance for credit risk assessment and administration for automated document processing.

Product Angle

To productize Agentics 2.0, transform it into a robust enterprise solution with integrations into existing data processing tools and platforms, emphasizing its reliability and scalability features.

Product Opportunity

The market includes companies transitioning to AI for data processing, particularly those needing reliability and observability in their workflows. Companies in finance, healthcare, and logistics could pay for a more reliable AI solution in their data workflows.

Disruption

Agentics 2.0 could replace less reliable AI workflow automation tools that do not offer strong typing or semantic observability, which are critical for enterprise-scale deployment.

Method & Eval

Agentics 2.0 was tested on challenging benchmarks like DiscoveryBench and Archer, demonstrating state-of-the-art performance, particularly in data-driven discovery and NL-to-SQL semantic parsing tasks.

Caveats

The success of Agentics 2.0 depends on its integration simplicity with existing systems and the ability of users to effectively adapt to its programming model, which could be complex for teams not familiar with functional or typed paradigms.

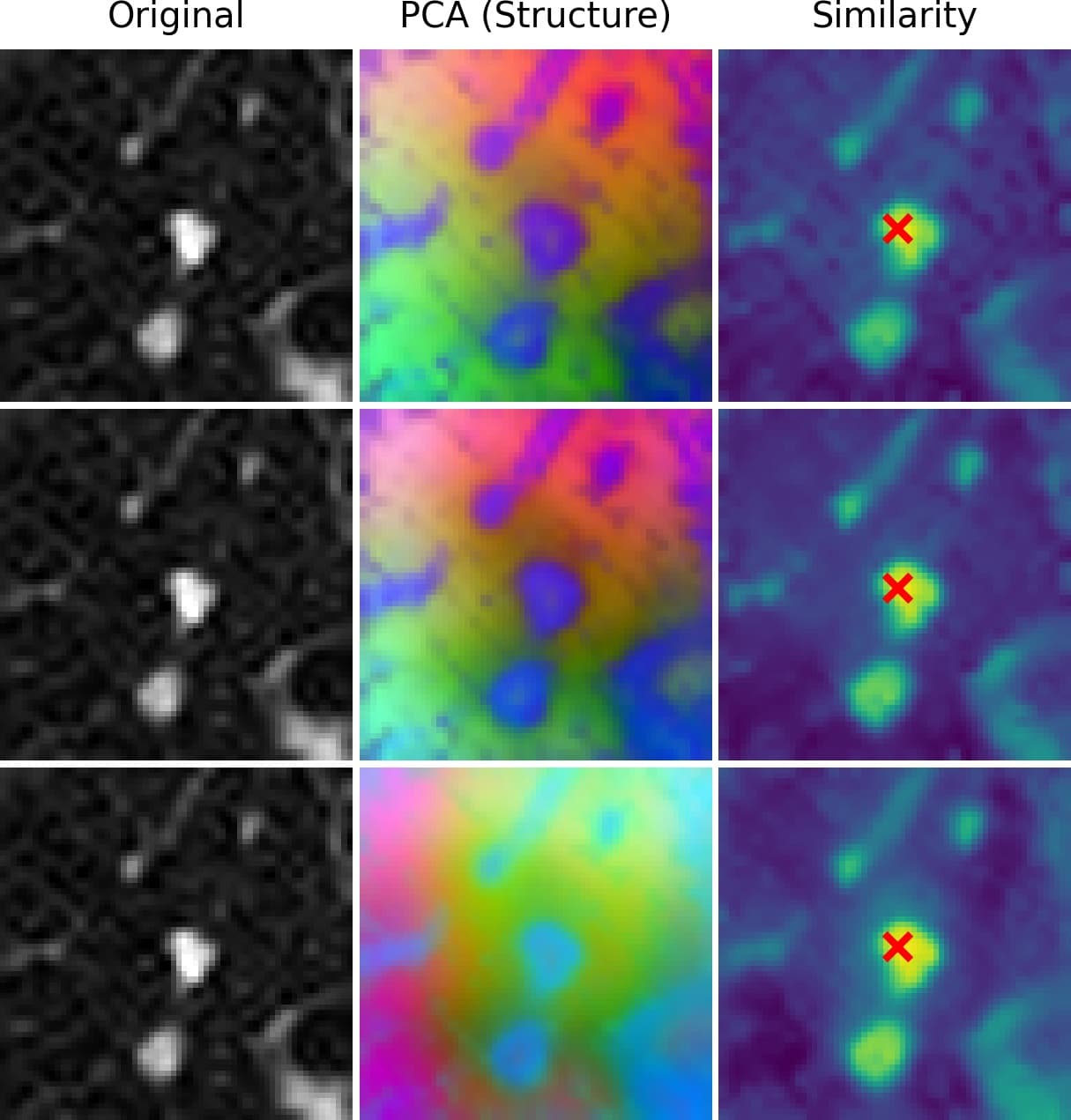

Develop 3D-enabled AI models from existing 2D models without retraining, leveraging PlaneCycle's adapter-free technology.

Why It Matters

This research allows leveraging vast existing investments in 2D foundation models by enabling them to handle 3D data without retraining, thus saving resources and time especially critical in fields like medical imaging.

Science

PlaneCycle cyclically distributes spatial aggregation across different planes using the original 2D backbone, allowing 3D fusion without architectural modifications or retraining, preserving pretrained 2D model biases.

Key Figures

Use Case Idea

Adapt medical imaging models to efficiently process CT and MRI scans without needing to develop entirely new 3D models, saving time and computational resources.

Product Angle

Develop an API that enables existing AI models to extend their capabilities to 3D datasets, primarily targeting industries with volumetric data requirements such as healthcare and scientific research.

Product Opportunity

As medical imaging and similar fields increasingly rely on 3D data processing, the ability to retrofit existing 2D models creates a significant market, potentially a multi-billion dollar industry as it affects major imaging device manufacturers and healthcare systems.

Disruption

It replaces the need for specialized 3D models or adapting 2D models with additional computational layers, offering a streamlined process for enabling 3D capability.

Method & Eval

The method was evaluated on six 3D classification and three 3D segmentation tasks, showing superior performance over traditional methods both in zero-training and fine-tuning conditions, achieving close to fully trained 3D models.

Caveats

Performance could vary with model architecture; although promising, results depend on the compatibility of existing 2D models with PlaneCycle's method.

Intelligence at Scale

Every paper analyzed, scored, and ranked for opportunity.

Intelligence Feed(3 of 123)

Not All Candidates are Created Equal: A Heterogeneity-Aware Approach to Pre-ranking in Recommender Systems

Most large-scale recommender systems follow a multi-stage cascade of retrieval, pre-ranking, ranking, and re-ranking. A key challenge at the pre-ranking stage arises from the heterogeneity of training instances sampled from coarse-grained retrieval results, fine-grained ranking signals, and exposure feedback. Our analysis reveals that prevailing pre-ranking methods, which indiscriminately mix heterogeneous samples, suffer from gradient conflicts: hard samples dominate training while easy ones remain underutilized, leading to suboptimal performance. We further show that the common practice of uniformly scaling model complexity across all samples is inefficient, as it overspends computation on easy cases and slows training without proportional gains. To address these limitations, this paper presents Heterogeneity-Aware Adaptive Pre-ranking (HAP), a unified framework that mitigates gradient conflicts through conflict-sensitive sampling coupled with tailored loss design, while adaptively allocating computational budgets across candidates. Specifically, HAP disentangles easy and hard samples, directing each subset along dedicated optimization paths. Building on this separation, it first applies lightweight models to all candidates for efficient coverage, and further engages stronger models on the hard ones, maintaining accuracy while reducing cost. This approach not only improves pre-ranking effectiveness but also provides a practical perspective on scaling strategies in industrial recommender systems. HAP has been deployed in the Toutiao production system for 9 months, yielding up to 0.4% improvement in user app usage duration and 0.05% in active days, without additional computational cost. We also release a large-scale industrial hybrid-sample dataset to enable the systematic study of source-driven candidate heterogeneity in pre-ranking.

ZipMap: Linear-Time Stateful 3D Reconstruction with Test-Time Training

Feed-forward transformer models have driven rapid progress in 3D vision, but state-of-the-art methods such as VGGT and $π^3$ have a computational cost that scales quadratically with the number of input images, making them inefficient when applied to large image collections. Sequential-reconstruction approaches reduce this cost but sacrifice reconstruction quality. We introduce ZipMap, a stateful feed-forward model that achieves linear-time, bidirectional 3D reconstruction while matching or surpassing the accuracy of quadratic-time methods. ZipMap employs test-time training layers to zip an entire image collection into a compact hidden scene state in a single forward pass, enabling reconstruction of over 700 frames in under 10 seconds on a single H100 GPU, more than $20\times$ faster than state-of-the-art methods such as VGGT. Moreover, we demonstrate the benefits of having a stateful representation in real-time scene-state querying and its extension to sequential streaming reconstruction.

Low-Resource Guidance for Controllable Latent Audio Diffusion

Generative audio requires fine-grained controllable outputs, yet most existing methods require model retraining on specific controls or inference-time controls (\textit{e.g.}, guidance) that can also be computationally demanding. By examining the bottlenecks of existing guidance-based controls, in particular their high cost-per-step due to decoder backpropagation, we introduce a guidance-based approach through selective TFG and Latent-Control Heads (LatCHs), which enables controlling latent audio diffusion models with low computational overhead. LatCHs operate directly in latent space, avoiding the expensive decoder step, and requiring minimal training resources (7M parameters and $\approx$ 4 hours of training). Experiments with Stable Audio Open demonstrate effective control over intensity, pitch, and beats (and a combination of those) while maintaining generation quality. Our method balances precision and audio fidelity with far lower computational costs than standard end-to-end guidance. Demo examples can be found at https://zacharynovack.github.io/latch/latch.html.

Frequently Asked Questions

Platform

ScienceToStartup is an AI-powered research intelligence platform that discovers which AI research papers could become the next breakthrough startup. We analyze papers from arXiv daily and rank them by commercial viability using our proprietary Signal Fusion algorithm.

We use our Signal Fusion algorithm that combines four signals: a GPT-4o viability score (1–10), community unicorn probability predictions, GitHub star velocity, and citation momentum. The composite score surfaces the papers with the highest commercial startup potential.

Yes. The core dashboard, paper analysis, topic pages, and research trends are completely free. We offer enterprise features like TTO dashboards, scout reports, and API access for institutional users.

Papers are ingested daily from arXiv. Viability scores are computed on ingestion. GitHub stars and citation counts update daily. Topic summaries regenerate weekly. Articles are published daily based on news analysis.

The Viability Score (1–10) measures how likely an AI paper is to become a fundable startup, based on code availability, author commercialization track record, market timing, and competitive landscape.